Intel's Ivy Bridge Architecture Exposed

by Anand Lal Shimpi on September 17, 2011 2:00 AM EST - Posted in CPUs, Intel, Ivy Bridge, IDF 2011, Trade Shows

The New GPU

Westmere marked a change in the way Intel approached integrated graphics. The GPU was moved onto the CPU package and used an n-1 manufacturing process (45nm when the CPU was 32nm). Performance improved but it still wasn't exactly what we'd call acceptable.

Sandy Bridge brought a completely redesigned GPU core onto the processor die itself. As a co-resident of the CPU, the GPU was treated as somewhat of an equal - both processors were built on the same 32nm process.

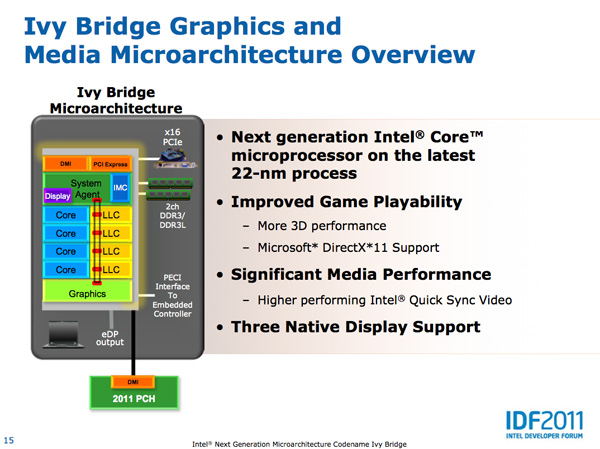

With Ivy Bridge the GPU remains on die, but it grows more than the CPU does this generation. Intel isn't disclosing the die split, but there are more execution units this round (16, up from 12 in SNB), so it would appear that the GPU occupies a greater percentage of the die than it did last generation. It's not near a 50/50 split yet, but it's a continued indication that Intel is taking GPU performance seriously.

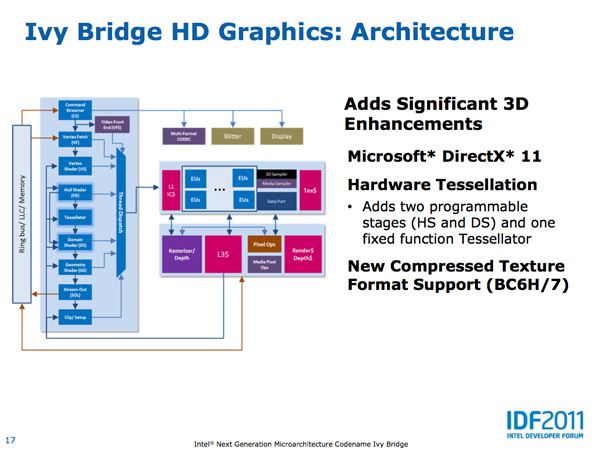

The Ivy Bridge GPU adds support for OpenCL 1.1, DirectX 11 and OpenGL 3.1. This will finally bring Intel's GPU feature set on par with AMD's. Ivy Bridge also supports three display outputs (up from two in Sandy Bridge). Finally, Ivy Bridge improves anisotropic filtering quality. As Intel Fellow Tom Piazza put it, "we now draw circles instead of flower petals," referring to image output from the famous AF tester.

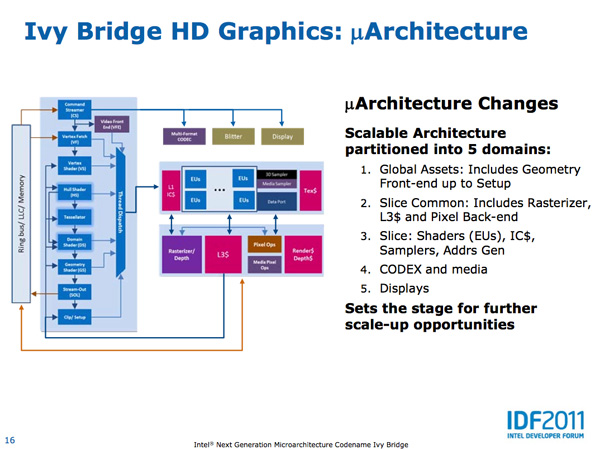

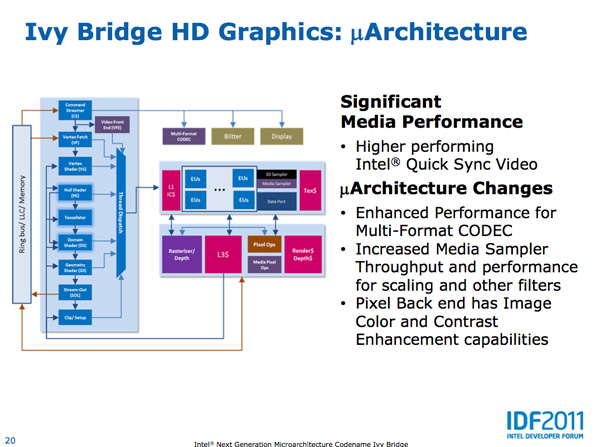

Intel made the Ivy Bridge GPU more modular than before. In SNB there were two GPU configurations: GT1 and GT2. Sandy Bridge's GT1 had 6 EUs (shaders/cores/execution units) while GT2 had 12 EUs; both configurations had one texture sampler. Ivy Bridge was designed to scale up and down more easily. GT2 has 16 EUs and 2 texture samplers, while GT1 has an unknown number of EUs (I'd assume 8) and 1 texture sampler.

I mentioned that Ivy Bridge was designed to scale up. Unfortunately, that upward scaling won't be happening in IVB: GT2 will be the fastest configuration available. The implication is that Intel had plans for IVB with a beefier GPU but it didn't make the cut. Perhaps we will see that change in Haswell.

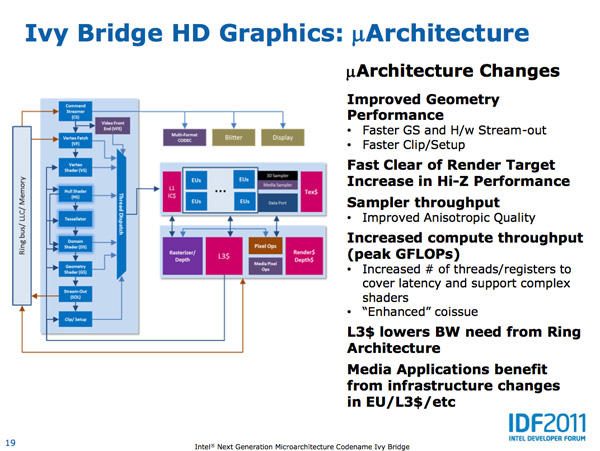

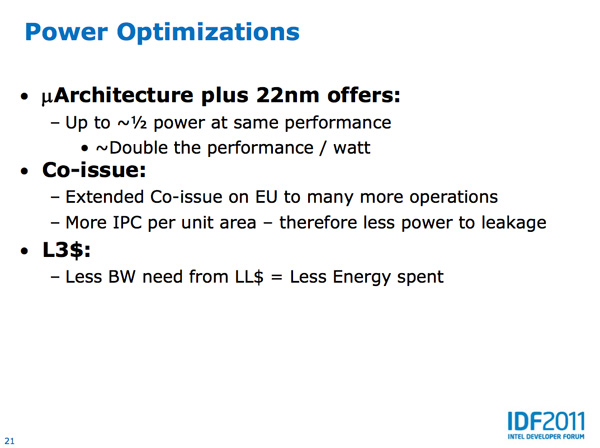

As we've already mentioned, Intel is increasing the number of EUs in Ivy Bridge. These EUs are also much better performers than their predecessors. Sandy Bridge's EUs could co-issue MADs and transcendental operations; Ivy Bridge's can do twice as many MADs per clock. As a result, a single Ivy Bridge EU gets close to twice the IPC of a Sandy Bridge EU. In other words, you're looking at nearly 2x the GFLOPS per EU in shader bound operations compared to Sandy Bridge. Combine that with more EUs in Ivy Bridge and this is where the bulk of the up-to-60% increase in GPU performance comes from.
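To put the EU numbers above in perspective, here is a rough back-of-envelope sketch of peak shader throughput for GT2 relative to Sandy Bridge. It uses only the EU counts quoted in this article and treats "nearly 2x" per EU as exactly 2.0, which is an optimistic assumption. The function name and the `clock_ratio` parameter are hypothetical and purely illustrative; this is not an Intel formula.

```python
# Back-of-envelope peak shader throughput for GT2, relative to Sandy Bridge.
SNB_EUS = 12        # Sandy Bridge GT2 execution units (from the article)
IVB_EUS = 16        # Ivy Bridge GT2 execution units (from the article)
PER_EU_GAIN = 2.0   # assumption: "nearly 2x" per-EU throughput rounded up to 2.0


def relative_peak_throughput(eus_new, eus_old, per_eu_gain, clock_ratio=1.0):
    """Peak shader throughput of the new part relative to the old one.

    clock_ratio is new clock / old clock; 1.0 means equal clocks.
    """
    return (eus_new / eus_old) * per_eu_gain * clock_ratio


ratio = relative_peak_throughput(IVB_EUS, SNB_EUS, PER_EU_GAIN)
print(f"Peak shader throughput vs SNB at equal clocks: {ratio:.2f}x")
```

Under these assumptions the theoretical shader ceiling comes out well above the up-to-60% figure Intel quotes, which is consistent with real workloads being limited by more than raw shader throughput.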

Intel also added a graphics-specific L3 cache within Ivy Bridge. Although the GPU can still share the CPU's L3 cache, a smaller cache located within the graphics core lets frequently used data be fetched without firing up the ring bus.

There are other performance enhancements within the shader core. Scatter & gather operations now execute 32x faster than on Sandy Bridge, which has implications for both GPU compute and general 3D gaming performance.

Despite the focus on performance, Intel actually reduced the GPU clock in Ivy Bridge. It now runs at up to 95% of the SNB GPU clock, at a lower voltage, while offering much higher performance. Thanks primarily to Intel's 22nm process (the aforementioned architectural improvements help as well), GPU performance per watt nearly doubles over Sandy Bridge. In our Llano review we found that AMD delivered much longer battery life in games (nearly 2x SNB) - Ivy Bridge should be able to help address this.
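As a rough illustration of what those figures imply, the sketch below combines the up-to-60% performance gain with the near doubling of performance per watt to estimate relative GPU power. Both inputs are "up to" or "nearly" numbers from this article, rounded here as an assumption, so treat the result as an order-of-magnitude guide and not a measured value. The function name is hypothetical.

```python
# Implied GPU power relative to Sandy Bridge, from the article's headline figures.
PERF_GAIN = 1.6            # "up-to-60%" higher GPU performance (from the article)
PERF_PER_WATT_GAIN = 2.0   # assumption: "nearly doubles" rounded up to 2.0


def implied_power_ratio(perf_gain, perf_per_watt_gain):
    """Power ratio = performance ratio / (performance-per-watt ratio)."""
    return perf_gain / perf_per_watt_gain


power = implied_power_ratio(PERF_GAIN, PERF_PER_WATT_GAIN)
print(f"Implied GPU power vs SNB: {power:.2f}x")
```

If the real perf-per-watt gain is somewhat below 2x, the implied power figure moves closer to Sandy Bridge's.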

Quick Sync Performance Improved

With Sandy Bridge Intel introduced an extremely high performing hardware video transcode engine called Quick Sync. The solution ended up delivering the best combination of image quality and performance of any hardware-accelerated transcoding option available from AMD, Intel and NVIDIA. Quick Sync leverages a combination of fixed function hardware, IVB's video decode engine and the EU array.

The increase in EUs and improvements to their throughput both contribute to increases in Quick Sync transcoding performance. Presumably Intel has also done some work on the decode side, since fast decode was one of the reasons Sandy Bridge was so quick at transcoding video. The combination of all of this results in up to 2x the video transcoding performance of Sandy Bridge. There's also the option of taking less of a performance increase in exchange for better image quality.

I've complained in the past about the lack of free transcoding applications (e.g. Handbrake, x264) that support Quick Sync. I suspect things will be better upon Ivy Bridge's arrival.

97 Comments

Arnulf - Sunday, September 18, 2011

"Voltage changes have a cubic affect on power, so even a small reduction here can have a tangible impact."

P = V^2/R

Quadratic relationship, rather than cubic?

damianrobertjones - Sunday, September 18, 2011

"As we've already seen, introducing a 35W quad-core part could enable Apple (and other OEMs) to ship a quad-core IVB in a 13-inch system."

Is Apple the only company that can release a 13" system?

medi01 - Monday, September 19, 2011

No. But it's the only one that absolutely needs to be commented on in orgasmic tone in US press (and big chunk of EU press too)

JonnyDough - Monday, September 19, 2011

They're the only ones who will market it with a flashy Apple logo light on a pretty aluminum case. Everyone knows that lightweight pretty aluminum cases are a great investment on a system that is outdated after just a few years. I wish Apple would make cars instead of PCs so we could bring the DeLorean back. Something about that stainless steel body just gets me so hot. Sure, it would get horrible gas mileage and be less safe in an accident. But it's just so pretty! Plus, although it would use a standard engine made by Ford or GM under the hood, its drivers would SWEAR that Apple builds its own superior hardware!

cldudley - Sunday, September 18, 2011

Am I the only one who thinks Intel is really wasting a lot of time and money on improvements to their on-die GPU? They keep adding features and improvements to the onboard video, right up to including DirectX 11 support, but isn't this really all an exercise in futility?

Ultimately a GPU integrated with the CPU is going to be bottlenecked by the simple fact that it does not have access to any local memory of its own. Every time it rasterizes a triangle or performs a texture operation, it is doing it through the same memory bus the CPU is using to fetch instructions, read and write data, etc.

I read that the GPU is taking a larger proportion of the die space in Ivy Bridge, and all I see is a tragic waste of space that would have been better put into another (pair of?) core or more L1/L2 cache.

I can see the purpose of integrated graphics in the lowest-end SKUs for budget builds, and there are certainly power and TDP advantages, and things like Quick-Sync are a great idea, but why stuff a GPU in a high-end processor that will be blown away by a comparatively middle-of-the-road discrete GPU?

Death666Angel - Sunday, September 18, 2011

I disagree. AMD has shown that on-die GPUs can already compete with middle-of-the-road discrete graphics in notebooks. Trinity will probably take on middle-of-the-road in the current desktop space.

Your memory bandwidth argument also doesn't seem to be correct, either. Except for some AMD mainboard graphics with dedicated sideport memory, all IGPs use the RAM, but a lot of them are doing fine. It is also nice to finally see higher clocked RAM be taken advantage of (see Llano 1666MHz vs 1800MHz). DDR4 will add bandwidth as well.

Once the bandwidth becomes a bottleneck, you can address that, but at the moment Intel doesn't seem to be there, yet, so they keep addressing their other GPU issues. What is wrong with that?

Also, how many people who buy high-end CPUs end up gaming 90% of the time on them? A lot of people need high-end CPUs for work related stuff, coding, CAD etc. Why should they have to buy a discrete graphics card?

Overall, you are doing a lot of generalization and you don't take into account quite a few things. :-)

cldudley - Sunday, September 18, 2011

Ironically I spend lots of time in AutoCAD, and a discrete graphics board makes a tremendous difference. Gamer-grade stuff is usually not the best thing in that arena though, it needs to be the special "workstation" cards, which have very different drivers. Quadro or FireGL.

I agree with you on the work usage, and gaming workloads not being 90% of the time, but on the other hand, workstations tend to have Xeons in them, with discrete graphics cards.

platedslicer - Sunday, September 18, 2011

As a fraction of the computer market, buyers who want power over everything else have plunged. Mobility is so important for OEMs now that fitting already-existent performance levels into smaller, cheaper devices becomes more important than pushing the envelope. I still remember a time when hardly anybody gave a rat's ass about how much power a CPU consumed as long as it didn't melt down. Today, power consumption is a crucial factor due to battery life and heat.

Personally these developments make me rather sad, partly because I like ever-shinier games, and (more importantly) because seeing the unwashed masses talk about computers as if they were clothing brands makes me want to rip out their throats. That's how the world works, though. Hopefully the chip makers will realize that there's still a market for power over fluff.

Looking at it on the bright side, CPU power stagnation might make game designers pay more attention to content. Hey, you have to look on the bright side of life.

KPOM - Monday, September 19, 2011

I think that's largely because for the average consumer, PCs have reached the point where CPU capabilities are no longer the bottleneck. Look at the success of the 2010 MacBook Air, which had a slow C2D but a speedy SSD, and sold well enough to last into mid-2011. Games are the next major hurdle, but that's the GPU rather than the CPU, and hence the reason it receives a bigger focus in Ivy Bridge (as it also did in Sandy Bridge compared to Westmere).

The emphasis now is having the power we have last longer and be available in smaller, more portable devices.

JonnyDough - Monday, September 19, 2011

You're missing the point. They aren't trying to beef up the power of the CPU. CPUs are already quite powerful for most tasks. They are trying to lower energy usage and sell en masse to businesses that use thousands of computers.